During the 20th century, inventors created devices that the public came to depend on. Arguably, one of the most important was the transistor. Engineers at Bell Laboratories developed it in 1947, originally to amplify sound over phone lines. The transistor replaced an older technology: vacuum tubes. The tubes were unreliable and bulky, and they generated a lot of heat.

The first transistor was a point-contact transistor that measured half an inch (1.27 centimeters) in height. The transistor wasn't very powerful, but physicists recognized the potential of the device. Before long, physicists and engineers began to incorporate transistors into various electronic devices. And as time passed, they also learned how to make transistors smaller and more efficient.


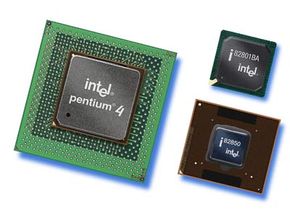

In 1958, engineers attached two transistors to a silicon crystal and created the world's first integrated circuit [source: Intel]. In turn, the integrated circuit paved the way for the development of the microprocessor. If you compare a computer to a human being, the microprocessor would be the brain. It makes calculations and processes data.

In 1965, Gordon Moore (who later co-founded Intel) made an interesting observation: Every 12 months, engineers were able to double the number of transistors on a square-inch piece of silicon. Like clockwork, engineers were finding ways to reduce the size of transistors. It's because of these small transistors that we have electronic devices like personal computers, smartphones and MP3 players. Without transistors, we would still be using vacuum tubes and mechanical switches to make calculations.
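To get a feel for how quickly that kind of doubling compounds, here is a minimal Python sketch. The function name `transistor_count` and the starting figure of 1,000 transistors are illustrative assumptions, not numbers from Moore or Intel.

```python
def transistor_count(start_count, years, doubling_period_years=1.0):
    """Project a transistor count, assuming it doubles once per period."""
    doublings = years / doubling_period_years
    return start_count * 2 ** doublings


# Hypothetical starting point: 1,000 transistors on a chip.
for years in (1, 5, 10):
    projected = transistor_count(1_000, years)
    print(f"After {years} years: about {projected:,.0f} transistors")
```

With a 12-month doubling period, ten years means ten doublings, so the count grows by a factor of 1,024.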

Since Moore's observation, the shrinking trend has continued, though not at the pace Moore observed. These days, the number of transistors doubles about every 24 months. That raises an interesting question: How small can transistors (and by extension, CPUs) get? In 1947, a single transistor measured a little over one-hundredth of a meter high. By the late 2000s, Intel produced microprocessors with transistors measuring only 45 nanometers wide. A nanometer is one-billionth of a meter!
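The figures above can be put side by side with a little arithmetic. This is a rough sketch only: it compares the height of the 1947 device with the width of a 45-nanometer transistor, which are not the same kind of measurement, and the variable names are made up for illustration.

```python
import math

first_transistor_m = 0.0127   # half an inch (1.27 cm), the 1947 device's height
modern_transistor_m = 45e-9   # 45 nanometers, the later transistor's width

# How many times smaller is the 45 nm transistor?
shrink_factor = first_transistor_m / modern_transistor_m
# How many times would you have to halve the size to get there?
halvings = math.log2(shrink_factor)

print(f"Shrink factor: roughly {shrink_factor:,.0f}x")
print(f"That equals about {halvings:.1f} successive halvings in size")

# Growth in transistor count over one decade at each doubling pace.
growth_12_months = 2 ** (10 / 1)   # doubling every 12 months
growth_24_months = 2 ** (10 / 2)   # doubling every 24 months
print(f"Decade at 12-month doubling: {growth_12_months:,.0f}x")
print(f"Decade at 24-month doubling: {growth_24_months:,.0f}x")
```

The shrink factor comes out in the hundreds of thousands, and the last two lines show how much a slower doubling pace matters over a single decade.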

Intel and other microprocessor manufacturers are already working on the next generation of chips. These will use transistors measuring a mere 32 nanometers in width. But some physicists and engineers think we might be bumping up against some fundamental physical limits when it comes to transistor size.
