Take the number two and double it and you've got four. Double it again and you've got eight. Keep doubling each result, and by the 10th number in this sequence you're up to 1,024. By the 20th you've hit 1,048,576. This is called exponential growth, and it's the principle behind one of the most important concepts in the evolution of electronics.
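The doubling arithmetic is easy to check for yourself. Here's a minimal Python sketch that builds the sequence 2, 4, 8, ... one doubling at a time. The function name `doubling_sequence` is just an illustrative choice.

```python
def doubling_sequence(start: int, count: int) -> list[int]:
    """Return `count` numbers, beginning with `start` and doubling each time."""
    values = [start]
    for _ in range(count - 1):
        values.append(values[-1] * 2)  # each entry is twice the one before it
    return values

seq = doubling_sequence(2, 20)
print(seq[9])   # 10th number in the sequence: 1024
print(seq[19])  # 20th number in the sequence: 1048576
```

Each entry is simply `2 ** n` for the nth number, which is why the values climb so quickly: ten more doublings multiply the result by another 1,024.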


In 1965, Intel co-founder Gordon Moore made an observation that has since dictated the direction of the semiconductor industry. Moore noted that the density of transistors on a chip doubled every year. That meant that every 12 months, chip manufacturers were finding ways to shrink transistor sizes so that twice as many could fit on a chip substrate.

Moore pointed out that the density of transistors on a chip and the cost of manufacturing chips were tied together. But the media -- and just about everybody else -- latched on to the idea that the microchip industry was developing at an exponential rate. Moore's observations and predictions morphed into a concept we call Moore's Law.

Over the years, people have tweaked Moore's Law to fit the parameters of chip development. At one point, the length of time between doubling the number of transistors on a chip increased to 18 months. Today, it's more like two years. That's still an impressive achievement considering that today's top microprocessors contain more than a billion transistors on a single chip.
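To see why stretching the doubling period from 12 to 18 to 24 months matters so much, here's a rough Python sketch. It assumes an idealized model in which the transistor count doubles cleanly every fixed period, so the growth factor after `years` is 2 raised to `years / doubling_period_years`. The function name and the 10-year window are hypothetical choices for illustration, not figures from Moore or Intel.

```python
def growth_factor(years: float, doubling_period_years: float) -> float:
    """How many times larger the transistor count gets after `years`,
    assuming an idealized, steady doubling every `doubling_period_years`."""
    return 2 ** (years / doubling_period_years)

# Compare the 12-, 18- and 24-month doubling periods over a 10-year span.
for period in (1.0, 1.5, 2.0):
    factor = growth_factor(10, period)
    print(f"doubling every {period * 12:.0f} months -> x{factor:,.0f} in 10 years")
```

Under this model, a decade of yearly doubling multiplies the count by 1,024, while an 18-month cadence gives roughly 102 times and a two-year cadence gives 32 times. Even the slowest of the three is still exponential growth.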

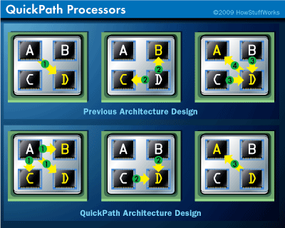

Another way to look at Moore's Law is to say that the processing power of a microchip doubles every two years. That's almost the same as saying the number of transistors doubles, since microprocessors draw their processing power from transistors. But another way to boost processor power is to find new ways to design chips so that they use those transistors more efficiently.

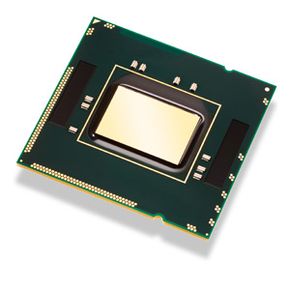

This brings us back to Intel. Intel's philosophy is to follow a tick-tock strategy. The tick refers to creating new methods of building smaller transistors. The tock refers to maximizing the microprocessor's power and speed. The most recent Intel tick chip to hit the market (at the time of this writing) is the Penryn chip, which has transistors on the 45-nanometer scale. A nanometer is one-billionth of a meter. To put that in perspective, an average human hair is about 100,000 nanometers in diameter.
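A little arithmetic shows just how small 45 nanometers is. This Python sketch uses only the figures from the paragraph above. It treats "45 nanometers" as a literal width, which is a simplification, since a process name like this is a rough label rather than one exact measurement of every part of a transistor.

```python
NM_PER_METER = 1_000_000_000  # a nanometer is one-billionth of a meter

penryn_feature_nm = 45      # Penryn's 45-nanometer scale, from the text
hair_diameter_nm = 100_000  # approximate diameter of a human hair, from the text

feature_in_meters = penryn_feature_nm / NM_PER_METER   # 45 nm expressed in meters
features_per_hair = hair_diameter_nm / penryn_feature_nm  # 45 nm widths across one hair

print(feature_in_meters)
print(round(features_per_hair))
```

By this rough measure, you could line up more than 2,200 of these 45-nanometer features across the width of a single hair.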

So what's the tock? That would be the new Core i7 microprocessor from Intel. It has transistors the same size as the Penryn's, but uses Intel's new Nehalem microarchitecture to increase power and speed. By following this tick-tock philosophy, Intel hopes to stay on target to meet the expectations of Moore's Law for several more years.
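Here's a toy Python model of the tick-tock cadence that ties it back to Moore's Law. It leans on an assumption that isn't from Intel's announcements: a common industry rule of thumb that each new manufacturing process shrinks transistor dimensions to about 0.7 of their previous size. Because area scales with the square of length, and 0.7 squared is about 0.49, each tick roughly doubles how many transistors fit in the same space. The function name `tick_tock` and the 0.7 factor are hypothetical. Only the first tick (Penryn) and the first tock (Core i7 on the Nehalem microarchitecture) correspond to real chips described here.

```python
LINEAR_SHRINK = 0.7  # assumption: rule-of-thumb linear shrink per new process

def tick_tock(start_nm: float, steps: int) -> list[tuple[str, float, float]]:
    """Return (phase, feature size in nm, density relative to the start) per step.

    Even-numbered steps are ticks (a new process with smaller transistors);
    odd-numbered steps are tocks (a new microarchitecture on the same process).
    The first tick is taken to be the starting process itself.
    """
    schedule = []
    size = start_nm
    for i in range(steps):
        if i % 2 == 0 and i > 0:
            size *= LINEAR_SHRINK  # tick: shrink the transistors
        phase = "tick" if i % 2 == 0 else "tock"
        # Transistors per unit area scale with 1 / size^2.
        density = (start_nm / size) ** 2
        schedule.append((phase, size, density))
    return schedule

for phase, size, density in tick_tock(45, 4):
    print(f"{phase}: {size:.1f} nm, {density:.2f}x density")
```

In this model the tocks leave transistor size (and density) unchanged and rely on better design for their gains, while each tick delivers roughly a 2x density jump, which is how alternating the two can keep pace with a two-year doubling.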

How does the Nehalem microprocessor use the same-sized transistors as the Penryn and yet get better results? Let's take a closer look at the microprocessor.
