To call the evolution of the computer meteoric seems like an understatement. Consider Moore's Law, an observation that Gordon Moore made back in 1965. He noticed that the number of transistors engineers could cram onto a silicon chip doubled every year or so. That manic pace slowed over the years to a slightly more modest 24-month cycle.

Awareness of the breakneck speed at which computer technology develops has seeped into the public consciousness. We've all heard the joke about buying a computer at the store only to find out it's obsolete by the time you get home. What will the future hold for computers?


Assuming microprocessor manufacturers can continue to live up to Moore's Law, and that processing power keeps pace with transistor counts, the processing power of our computers should double every two years. That would mean computers 100 years from now would be 1,125,899,906,842,624 times more powerful than today's models. That's hard to imagine.
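That number comes from compounding: 100 years at one doubling every two years is 50 doublings, or 2 to the 50th power. A quick Python check, assuming a clean doubling exactly every 24 months with no slowdown along the way:

```python
YEARS = 100
DOUBLING_PERIOD_YEARS = 2  # assumption: one doubling every 24 months, never slowing

# Each doubling multiplies the previous total by 2, so n doublings give 2 ** n.
doublings = YEARS // DOUBLING_PERIOD_YEARS
growth_factor = 2 ** doublings

print(f"{doublings} doublings -> {growth_factor:,}x")
```

Running it prints `50 doublings -> 1,125,899,906,842,624x`, matching the figure above.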

But even Gordon Moore would caution against assuming Moore's Law will hold out that long. In 2005, Moore said that as transistors reach the atomic scale, we may encounter fundamental barriers we can't cross [source: Dubash]. At that point, we won't be able to cram more transistors into the same amount of space.

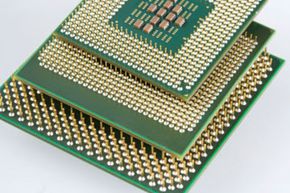

We may get around that barrier by building larger processor chips with more transistors. But transistors generate heat, and a hot processor can cause a computer to shut down. Computers with fast processors need efficient cooling systems to avoid overheating. The larger the processor chip, the more heat the computer will generate when working at full speed.

Another tactic is to switch to a multi-core architecture. A multi-core processor places two or more independent processing units, called cores, on a single chip and divides the work among them. Multi-core processors are good at handling calculations that can be broken down into smaller, independent pieces; however, they aren't as good at handling large computational problems that can't be broken down.
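To make that trade-off concrete, here is a rough Python sketch. It splits a sum into independent chunks, computed one after another here to stand in for separate cores, and then uses Amdahl's law, a standard model of parallel speedup that the article itself doesn't mention, to estimate how much eight cores help as the divisible share of a job shrinks. The helper names `split_into_chunks` and `amdahl_speedup` are hypothetical, chosen for illustration:

```python
from typing import List, Sequence


def split_into_chunks(data: Sequence[int], cores: int) -> List[Sequence[int]]:
    """Divide data into at most `cores` roughly equal, independent slices."""
    if not data:
        return []
    size = -(-len(data) // cores)  # ceiling division so no element is left over
    return [data[i:i + size] for i in range(0, len(data), size)]


def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Amdahl's law: best-case speedup when only part of a job can be split."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


# A sum divides cleanly: each core could add up its own chunk independently,
# and the partial results combine into the same total as one big sum.
numbers = list(range(1, 101))
partial_sums = [sum(chunk) for chunk in split_into_chunks(numbers, 4)]
print(partial_sums, sum(partial_sums))

# How much do 8 cores help as the share of divisible work shrinks?
for fraction in (1.0, 0.9, 0.5, 0.0):
    print(f"{fraction:.0%} parallel -> {amdahl_speedup(fraction, 8):.2f}x")
```

The four partial sums (325, 950, 1575 and 2200) add up to 5,050, the same answer a single core would reach. The model also shows the limit the paragraph describes: a fully divisible job approaches an 8x speedup on eight cores, a half-divisible one reaches only about 1.78x, and a job that can't be split at all gets no speedup.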

Future computers may rely on a completely different model than traditional machines. What if we abandon the old transistor-based processor?
