With each step forward in the digital revolution, "The Matrix" becomes less like fiction and more like reality. That's in part because hardware engineers and software developers continue to refine AR technology. But what is augmented reality?

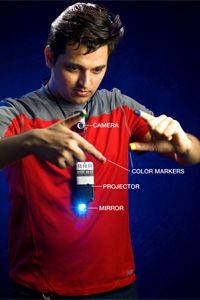

Augmented reality is a form of mixed reality that blends interactive digital elements – like dazzling visual overlays, buzzy haptic feedback, or other sensory projections – into our real-world environments. If you experienced the hubbub of Pokemon Go, you witnessed augmented reality in action.

This (once incredibly popular) mobile game let users view the world around them through their smartphone cameras while it projected game items, including onscreen icons, scores, and ever-elusive Pokemon creatures, as overlays that seemed to sit right in their real-life neighborhoods. The game's design was so immersive that it sent millions of kids and adults alike walking (and absentmindedly stumbling) through their real-world backyards in search of virtual prizes.

Google Sky Map is another well-known AR app. It overlays information about constellations, planets and more as you point your smartphone or tablet toward the heavens. Wikitude is an app that looks up information about a landmark or object when you simply point your smartphone's camera at it. Need help visualizing new furniture in your living room? The IKEA Place app will overlay a new couch on that space before you buy it so that you can make sure it fits [source: Marr].

But augmented reality technology extends far beyond our smartphones. It's a technology that finds uses in more serious matters, from business to warfare to medicine.

The U.S. Army, for example, uses AR devices to create digitally enhanced training missions for soldiers. The concept has become so prevalent that the Army has given one program an official name: Synthetic Training Environment, or STE. Wearable AR glasses and headsets may well help future armies process digital information at incredible speeds, helping commanders make better battlefield decisions on the fly. There are fascinating business benefits, too. The Gatwick passenger app, for example, uses AR to help travelers navigate the insanity of a packed airport.

The possibilities of augmented reality solutions are seemingly limitless. The only uncertainty is how smoothly and quickly developers will integrate these capabilities into devices that we'll use on a daily basis.
